Hiring Is a Prediction Problem: Rethinking Recruitment as a Data-Driven Decision System

Fri, Jan 23, 2026

The most expensive decisions your company makes? They're not about capital allocation or market strategy. They're about people. Every hire is a high-stakes bet on someone's future performance: a forecast you're making with incomplete data, under time pressure, with consequences that play out over years.

Yet most organizations still treat hiring like it's an art form. Resumes get screened by keyword matches. Interviews run on gut instinct. Final decisions emerge from fragmented debates where whoever argues loudest usually wins. When these hires don't work out (and research shows 80% of employee turnover traces back to poor hiring decisions), nobody blames the system. They blame the candidate, the hiring manager, or just chalk it up to bad luck.

Here's the actual problem: Hiring has always been a prediction problem, not an interview problem. And most companies are using prediction methods that barely beat random chance.

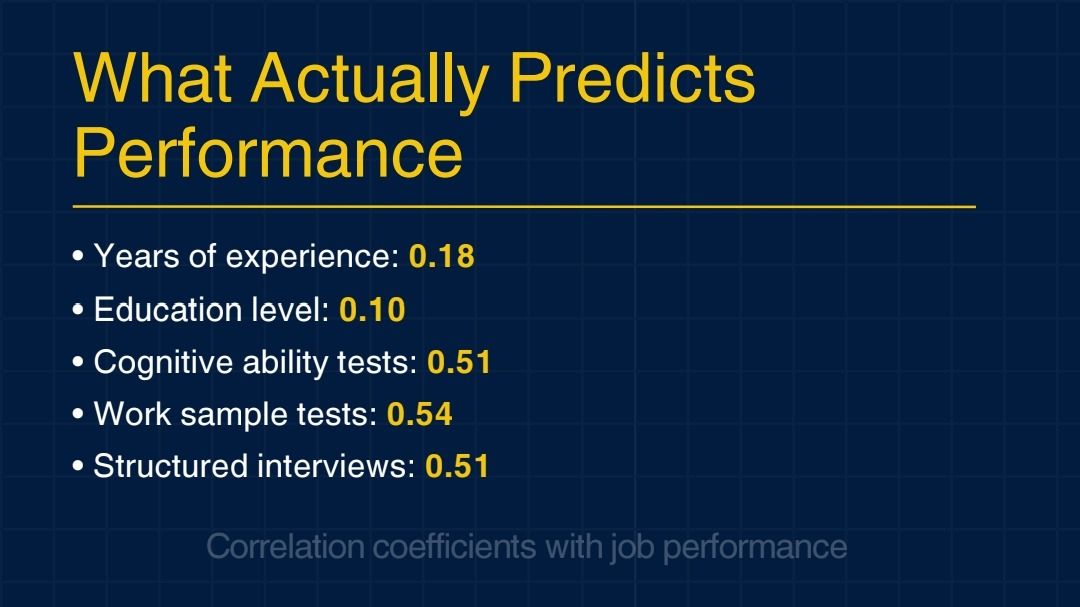

Look at the evidence. Schmidt and Hunter's landmark meta-analysis examined 85 years of personnel research and found that years of experience correlates just 0.18 with job performance. Education level? 0.10. That "good feeling" you get from an unstructured interview? Around 0.38. Compare that to structured assessments and cognitive ability tests, which achieve 0.51 to 0.63 predictive validity, meaning they're dramatically better at forecasting who will actually succeed.
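One way to read these coefficients is to square them: r² is the share of variation in job performance that a predictor accounts for. The sketch below does that for the figures quoted above. The dictionary labels are shorthand chosen for this example, and squaring the correlation is a standard statistical reading rather than a step taken from the meta-analysis itself.

```python
# Validity coefficients as quoted in this article (Schmidt and Hunter).
validities = {
    "education level": 0.10,
    "years of experience": 0.18,
    "unstructured interview": 0.38,
    "structured methods (low end)": 0.51,
    "structured methods (high end)": 0.63,
}


def variance_explained(r: float) -> float:
    """Share of performance variance accounted for by a predictor with validity r."""
    return r ** 2


for name, r in validities.items():
    print(f"{name:32s} r={r:.2f}  r^2={variance_explained(r):.1%}")
```

Read this way, the gap is even wider than the raw correlations suggest: a predictor at 0.63 accounts for more than twelve times the performance variance of one at 0.18.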

The gap isn't small. It's the difference between guessing and knowing. Between hiring as hopeful intuition versus hiring as rigorous forecasting.

The U.S. Department of Labor puts the cost of a single bad hire at roughly 30% of first-year salary. Harvard Business Review research suggests the real damage can hit five times annual compensation once you factor in lost productivity, team disruption, and the cost of rehiring. For a $100,000 role, that's a potential $500,000 mistake, and that's just one position.
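The arithmetic is simple enough to run for your own roles. The sketch below applies the two multipliers cited above to the $100,000 example, then scales it to a year of hiring. The hiring volume and mis-hire rate are hypothetical inputs picked for illustration, not figures from any of the cited sources.

```python
def bad_hire_cost(annual_salary: float, multiplier: float) -> float:
    """Estimated cost of one bad hire as a multiple of annual salary."""
    return annual_salary * multiplier


salary = 100_000                      # the example role from the text
low = bad_hire_cost(salary, 0.30)     # 30%-of-salary estimate
high = bad_hire_cost(salary, 5.0)     # five-times-compensation estimate

# Hypothetical scenario (assumption): 50 hires a year, 1 in 5 does not work out.
hires_per_year = 50
bad_hire_rate = 0.20
expected_bad_hires = hires_per_year * bad_hire_rate

print(f"Per bad hire: ${low:,.0f} to ${high:,.0f}")
print(f"Per year:     ${expected_bad_hires * low:,.0f} to ${expected_bad_hires * high:,.0f}")
```

Swap in your own salary bands and attrition data to see what range you are actually exposed to.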

But here's what shifts the equation: Organizations that reframe hiring as structured prediction (using validated signals, decision science, and systematic evaluation) consistently outperform those stuck with traditional methods. They hire faster, retain longer, and build stronger teams. Unilever cut time-to-hire by 75% and improved diversity by 16%. ADT Security reduced early attrition by 10% while tripling recruiter efficiency. Fractal Analytics (the company behind iqigai) saw hiring effectiveness increase 3× and time-to-hire drop 60%.

These aren't aspirational numbers. They're documented results from treating hiring as the forecasting problem it actually is.

This article breaks down why traditional hiring falls apart at scale, what the research actually says about predictive validity, and how modern organizations are engineering hiring systems that work. If you're still relying on resumes and gut-feel interviews to make six-figure bets on people, you're competing with one hand tied behind your back.

The question isn't whether to adopt evidence-based hiring anymore. The research settled that decades ago. The real question is how fast you can build the infrastructure that makes it practical.

Let's look at what that infrastructure actually involves, and why it matters more than most leaders realize.

Why Traditional Hiring Collapses Under Scale

Human judgment works pretty well in small doses. A founder interviewing their first ten employees can lean heavily on pattern recognition and personal involvement. But as organizations scale, this approach falls apart fast. Cognitive load piles up. Consistency disappears. Two interviewers assess the same candidate and walk away with opposite conclusions, not because either is incompetent, but because unstructured judgment varies wildly under pressure.

The data backs this up consistently. Research on interviewer reliability shows that without structure, evaluations of the same candidates diverge significantly. What one interviewer calls "strong strategic thinking," another interprets as "vague and unfocused." This isn't traditional bias we're talking about. It's noise, the term Daniel Kahneman uses for unwanted variability in judgments that should be consistent.
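Noise in this sense is measurable. A minimal sketch, assuming each candidate is rated on a 1 to 5 scale by several interviewers: the spread of ratings for the same candidate is a rough noise estimate, and averaging it across candidates gives a single number to track over time. The ratings below are invented for illustration, and the standard deviation is one simple choice of metric among several.

```python
from statistics import mean, pstdev

# Hypothetical 1-5 ratings: each candidate scored by three interviewers.
ratings = {
    "candidate_a": [4, 4, 5],
    "candidate_b": [2, 5, 3],
    "candidate_c": [3, 3, 3],
}


def rating_noise(scores):
    """Spread of ratings given to one candidate (0 means full agreement)."""
    return pstdev(scores)


def average_noise(ratings_by_candidate):
    """Mean within-candidate spread across all candidates."""
    return mean(rating_noise(s) for s in ratings_by_candidate.values())


for name, scores in ratings.items():
    print(f"{name}: ratings={scores} noise={rating_noise(scores):.2f}")
print(f"average noise: {average_noise(ratings):.2f}")
```

If that average stays high after interviewers have seen the same evidence, the problem is the process, not the people.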

The problem gets worse at volume. Recruiters screening hundreds of applications can't maintain deep analysis on each one. Interviewers running back-to-back sessions hit decision fatigue. Hiring committees debate forever because they're working from different evaluation criteria. The system doesn't fail because people aren't trying hard enough. It fails because it's asking humans to do something they're structurally bad at: making repeated, consistent predictions without any decision infrastructure.

Schmidt and Hunter's meta-analysis (which synthesized 85 years of personnel selection research) found that unstructured interviews correlate only 0.38 with job performance. Meanwhile, structured interviews combined with cognitive ability tests hit 0.63 predictive validity. That gap isn't marginal. It's the difference between guessing and actually forecasting.

From Stories to Signals: What Actually Predicts Performance

Resumes tell stories. Interviews amplify those stories. But narratives, no matter how compelling, turn out to be weak predictors of job performance. A candidate who articulates their experience with confidence might excel, or might not. A candidate with impressive credentials might fit your needs perfectly, or completely miss the mark.

This doesn't mean resumes and interviews are useless. It means they've been misused. The goal isn't to eliminate human judgment. It's to stabilize it. Interviews still have value, but their role needs to shift from being the primary discovery mechanism to serving as a validation layer. Resumes can provide basic qualification filters, but they shouldn't be driving selection decisions.

This is where signal extraction becomes crucial. Modern hiring systems don't evaluate narratives. They extract predictive signals and model probabilities. Instead of asking "does this resume look impressive?" they ask "what does this candidate's demonstrated behavior actually predict about future performance in this specific context?"

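Schmidt and Hunter's research lays out the hierarchy clearly: education level (0.10) and years of experience (0.18) sit at the bottom, unstructured interviews (0.38) land in the middle, and structured assessments and cognitive ability tests (0.51 to 0.63) sit at the top.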

The data doesn't lie: demonstrated ability outperforms credentials by a massive margin.

iqigai's 360° Assessment platform puts this insight into practice. Rather than relying on resume keywords, candidates demonstrate actual competencies through domain-specific simulations, cognitive assessments, and behavioral tasks (all validated with AI proctoring to maintain integrity). This isn't just theory. Unilever eliminated resume-based screening in favor of game-based assessments and saw applications double, time-to-hire drop from four months to four weeks, and workforce diversity climb by 16%.

When you measure what actually predicts success instead of proxies for prestige, hiring quality transforms.

Role Definition as Prediction Design

Most hiring failures start before the interview even begins, when the role itself stays poorly defined. Organizations write job descriptions aspirationally, listing ideal traits rather than empirically modeling what predicts success. This vagueness ripples through the entire process.

When success criteria aren't clear, every interviewer brings their own interpretation. One prioritizes technical depth. Another values collaboration. A third emphasizes execution speed. Without alignment, hiring becomes a negotiation of preferences rather than evidence-based decision-making.

Role definition needs to function as signal design. What behaviors do your top performers consistently show? What skills actually drive measurable outcomes? What environmental factors help or hurt performance? Answering these questions requires looking backward: examining past hires, identifying patterns, building models grounded in actual performance data.
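Looking backward can start small. The sketch below correlates two candidate signals with later performance ratings for a handful of past hires and ranks them by strength. All data and signal names are made up for illustration, and five hires is far too few for a real conclusion; this shows the shape of the analysis, not any vendor's actual method.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Hypothetical data: one entry per past hire, in the same order in every list.
performance = [3, 4, 2, 5, 4]  # later performance rating, 1-5
behaviors = {
    "structured_written_updates": [2, 4, 1, 5, 4],  # observed behavior score, 1-5
    "years_of_experience": [3, 5, 4, 4, 4],
}

signal_strength = {
    name: pearson(scores, performance) for name, scores in behaviors.items()
}
for name, r in sorted(signal_strength.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:28s} r={r:+.2f}")
```

Signals that hold up across enough hires belong in the role definition. Signals that don't are preferences, not predictors.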

This is where iqigai's Recruiter Copilot creates immediate value. Rather than asking hiring managers to intuit requirements, the system analyzes historical performance data, surfaces patterns across high-performing employees, and auto-generates job descriptions anchored in evidence. The output isn't just better-written prose. It's a clearer prediction target. You're not guessing anymore what "strong communicator" means. You're defining it based on behaviors that correlated with success in your previous hires.

McKinsey research confirms that organizations with clear, performance-based role definitions see significantly lower early attrition and faster time-to-productivity.

Structured Interviews as Signal Validation, Not Discovery

Interviews still have value, but their purpose needs to shift. Instead of trying to discover talent from scratch, use interviews to validate signals that assessments have already surfaced. The conversation becomes more focused, more consistent, and ultimately more predictive.

Structure is what makes this work. Unstructured interviews (where each interviewer asks whatever comes to mind) introduce massive variance. Structured interviews, where every candidate answers the same core behavioral questions, double predictive accuracy according to meta-analytic research published in Personnel Psychology.

Google implemented structured interviews with standardized questions and committee evaluation, hitting roughly 0.65 correlation with on-the-job performance (way above typical unstructured approaches). The Society for Industrial and Organizational Psychology (SIOP) guidelines consistently recommend structured methods as best practice.

iqigai's platform enhances this by providing real-time AI insights during interviews. As conversations unfold, the system surfaces alignment patterns around cultural fit, role-specific competencies, and transferable skills. Interviewers aren't reading from scripts. They're guided by frameworks that ensure consistency while preserving human judgment.

This doesn't replace interviewers. It stabilizes them. When you know what signals to look for, when you're operating from shared evaluation frameworks, and when real-time support surfaces relevant patterns, interviews become far more reliable. Two interviewers assessing the same candidate are more likely to reach similar conclusions, not because they're being forced to agree, but because they're working from the same evidence base.

Decision-Making as Probability Synthesis

Final hiring decisions rarely involve clear choices between an obviously perfect candidate and an obviously wrong one. You're weighing probabilities and trade-offs. This candidate scores higher on technical depth but lower on cultural alignment. That candidate shows strong learning agility but has less domain experience. How do you actually decide?

Traditional hiring treats this as a debate. Stakeholders argue their positions. The loudest voice or the highest-ranking opinion usually wins. But debates optimize for persuasion, not accuracy.

Better decision-making treats hiring as probability synthesis. Algorithms aggregate signals (assessment scores, interview evaluations, predictive models) and present them in unified decision frameworks. iqigai's Matching Agent does exactly this, ranking candidates based on fit probability, predicted performance, and expected long-term ROI.

This doesn't eliminate human judgment. It organizes it. Hiring teams still make the final calls, but they're working from shared data rather than fragmented impressions. When disagreement comes up, it's grounded in evidence: "I'm weighting cultural alignment more heavily than technical skills because our retention data shows alignment predicts success in this role." That's substantively better than "I just have a good feeling about this person."

Research consistently shows that structured decision aggregation (combining multiple evidence sources through consistent frameworks) outperforms individual intuition. You're not ignoring instinct. You're making sure instinct operates on better information.
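To make the idea concrete, here is a minimal sketch of signal aggregation: each candidate has a few scores on a 0 to 1 scale, the team agrees on weights before reviewing anyone, and a weighted average produces the ranking. The candidates, signal names, and weights are all hypothetical, and this is a generic weighted-average scheme, not a description of how iqigai's Matching Agent works internally.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    signals: dict  # signal name -> score on a 0-1 scale


# Hypothetical weights, agreed on before any candidate is reviewed.
SKILL_HEAVY = {"assessment": 0.5, "structured_interview": 0.3, "cultural_alignment": 0.2}
ALIGNMENT_HEAVY = {"assessment": 0.2, "structured_interview": 0.3, "cultural_alignment": 0.5}


def composite_score(candidate, weights):
    """Weighted average of the candidate's signals."""
    total_weight = sum(weights.values())
    return sum(w * candidate.signals[k] for k, w in weights.items()) / total_weight


def rank(candidates, weights):
    """Candidates ordered from highest to lowest composite score."""
    return sorted(candidates, key=lambda c: composite_score(c, weights), reverse=True)


pool = [
    Candidate("A", {"assessment": 0.9, "structured_interview": 0.7, "cultural_alignment": 0.5}),
    Candidate("B", {"assessment": 0.6, "structured_interview": 0.8, "cultural_alignment": 0.9}),
]

for label, weights in [("skill-heavy", SKILL_HEAVY), ("alignment-heavy", ALIGNMENT_HEAVY)]:
    order = [c.name for c in rank(pool, weights)]
    print(f"{label}: {order}")
```

The ranking flips depending on the weights, which is the point: the disagreement moves from "who do I like" to "which signal matters most for this role," and that question can be answered with retention and performance data.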

ADT Security implemented data-driven matching and saw immediate impact: 10% reduction in 90-day attrition, 13% overall attrition decrease, 50% faster time-to-hire, and recruiters handling 3× the candidate volume. These aren't marginal improvements. They're structural transformations in hiring quality.

Why Prediction Accuracy Compounds Financially

Better prediction doesn't just improve hiring quality in some abstract sense. It compounds financially. Early attrition is expensive. When prediction improves, retention improves. Candidates who genuinely fit the role, the team, and the organizational culture stick around past those critical first 90 days. And retention improvements cascade.

Stable teams build institutional knowledge. They onboard new hires more effectively. They execute more consistently. Frank Schmidt's research estimates that increasing selection method validity generates "millions of dollars" in gains over time, while low-validity methods quietly cost organizations millions in underperformance.
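Where do estimates like that come from? A common tool in the selection literature is the Brogden-Cronbach-Gleser utility model, which multiplies the number of hires, their expected tenure, the validity of the selection method, the dollar value of one standard deviation of job performance, and the average standardized predictor score of those hired, then subtracts assessment costs. The sketch below applies it to the difference between two methods. Every input value is a hypothetical assumption chosen for illustration, including the performance standard deviation and the per-applicant cost.

```python
def selection_utility_gain(
    n_hired: int,
    tenure_years: float,
    validity_gain: float,
    sd_performance_dollars: float,
    mean_z_of_hired: float,
    n_applicants: int = 0,
    extra_cost_per_applicant: float = 0.0,
) -> float:
    """Brogden-Cronbach-Gleser estimate of the dollar gain from a more valid method.

    validity_gain is the new method's validity minus the old method's validity.
    mean_z_of_hired is the average standardized predictor score of those hired.
    """
    benefit = (
        n_hired * tenure_years * validity_gain
        * sd_performance_dollars * mean_z_of_hired
    )
    cost = n_applicants * extra_cost_per_applicant
    return benefit - cost


# Hypothetical scenario: moving from unstructured interviews (0.38)
# to structured interviews plus cognitive testing (0.63).
gain = selection_utility_gain(
    n_hired=100,
    tenure_years=3,
    validity_gain=0.25,             # 0.63 - 0.38, figures quoted in this article
    sd_performance_dollars=40_000,  # assumption: 40% of a $100,000 salary
    mean_z_of_hired=0.8,            # assumption: depends on how selective you are
    n_applicants=1_000,
    extra_cost_per_applicant=50,    # assumption
)
print(f"Estimated gain over the cohort's tenure: ${gain:,.0f}")
```

Under these assumptions the estimate lands in the low millions for a single 100-person cohort. Different inputs will move the number, but the structure explains why small validity gains add up.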

Fractal Analytics (the company behind iqigai) uses the platform internally for data and tech hiring. According to Rohini Singh, Chief People Officer at Fractal: "We've been using iqigai to hire early-career tech talent for 15+ months. It's 3× more effective at identifying high-conversion candidates. Hiring time reduced 60%. Now rolled out across all our hiring."

This isn't theoretical optimization. It's measurable business impact you can track.

The Infrastructure Advantage

The strongest hiring systems don't rely on exceptional interviewers or heroic recruiters. They rely on consistent processes that produce reliable predictions at scale. They treat hiring as the forecasting problem it actually is, and they engineer accordingly.

iqigai positions itself as a decision infrastructure for talent. Not productivity software, not another ATS, but the layer that extracts signals from noise, models probabilities from sparse data, and stabilizes hiring teams operating under pressure. The platform doesn't promise certainty. It promises to help you navigate uncertainty more effectively.

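The pieces covered in this article are the 360° Assessment platform for demonstrated competencies, Recruiter Copilot for evidence-based role definition, real-time interview insights for consistent evaluation, and the Matching Agent for ranking candidates.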

This isn't about working harder within broken systems. It's about building systems where good decisions become structurally easier to make.

Predictability Beats Polish

Hiring will never be perfect. You're always operating under some level of uncertainty. But perfection isn't the goal. Predictability is. A system that makes consistently good predictions will outperform one that occasionally makes brilliant ones.

Organizations adopting structured, evidence-based hiring consistently report faster time-to-hire, lower early attrition, and higher recruiter throughput.

These aren't aspirational targets. They're documented outcomes from organizations like Unilever, ADT, and Fractal Analytics that rebuilt hiring around prediction rather than intuition.

The choice isn't between human judgment and algorithms. It's between unsupported judgment and judgment augmented by evidence. The latter wins consistently.

For leaders evaluating hiring systems, the question isn't whether to adopt structured methods anymore. The research settled that decades ago. The question is how quickly you can implement infrastructure that makes those methods practical at scale.

Good hiring doesn't come from chasing perfection. It comes from asking better questions, extracting clearer signals, and being ruthlessly honest about what actually predicts success.

When those three things align, the right candidate rarely feels like a gamble. It feels like clarity.
