The Infrastructure Gap Behind Skills-Based Hiring

Tue, Mar 10, 2026

The Paradox at the Heart of Skills-Based Hiring

Every senior leader today will tell you the same thing: we hire for skills, not degrees. It's the right answer. It's just not, in most organisations, the real one.

The intention is genuine. The belief is real. But belief doesn't move candidates through an ATS, and intention doesn't redesign a recruiter's workflow. What actually runs hiring, day to day, is infrastructure — the taxonomies, the tech stack, the verification systems, the incentive structures baked into decades of credential-era thinking. And that infrastructure was never built for skills-first hiring.

This is why the gap between stated policy and actual practice is so stubborn. When large firms publicly removed degree requirements from job postings, hires without degrees rose by just 3.5 percentage points. That's not a rounding error. That's a system reverting to its defaults the moment leadership attention moved on.

The research on what actually predicts job performance has been settled for decades. Schmidt and Hunter's landmark meta-analysis showed that education level correlates just 0.10 with on-the-job performance. Validated assessments and work samples? Between 0.51 and 0.54. The gap between what we measure and what predicts success is enormous, and most organisations know it.

So why hasn't hiring changed? That's the question this piece is really about. Not the philosophy of skills-based hiring, but the operational reality of why it breaks and what it would actually take to fix it.

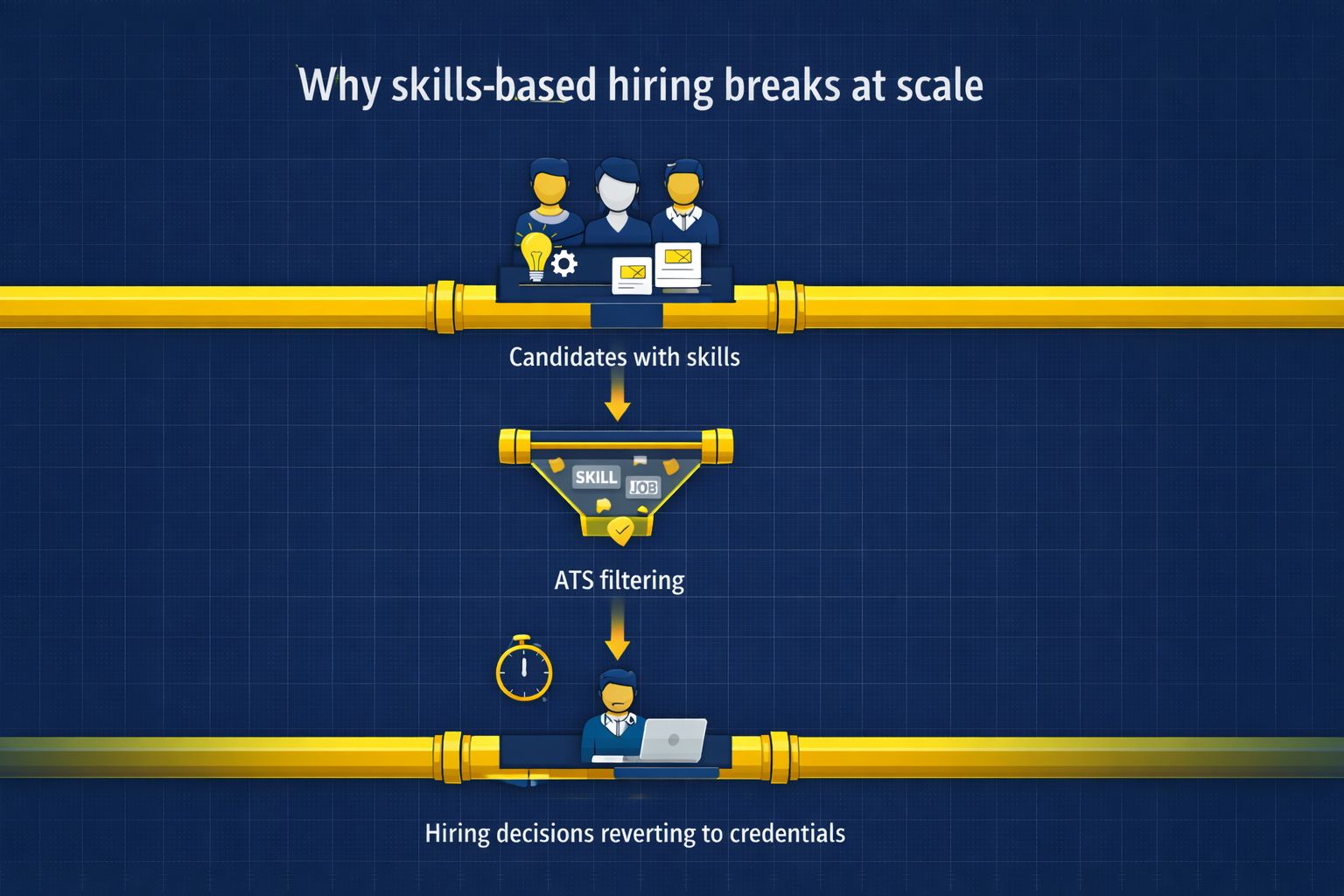

Why Skills-Based Hiring Breaks at Scale

The pilot always works. Hire for demonstrated ability, remove the degree filter for a cohort, run structured assessments instead of résumé screens, and the results are usually better. Faster hires, stronger performance, more diverse shortlists. Leaders see the numbers, declare the experiment a success, and then try to roll it out across the organisation.

That's where it falls apart.

The collapse is not random, and it is not a failure of commitment. It is what happens when a new strategy runs into old infrastructure. Degree filters persist not because hiring managers are lazy or biased but because they are functional. When a recruiter must process hundreds of applications under a deadline, a known credential is fast, legible, and defensible in a way that a skills assessment or a verified badge simply is not yet. Boards want clean audit trails. Legal teams want documented criteria. A university name or professional licence gives them both. Skills evidence, even when it predicts performance better, requires systems, standards, and shared vocabulary that most organisations haven't built.

The cost of this is visible in the data. Education level correlates just 0.10 with actual job performance, while validated assessments reach 0.51 to 0.54. Organisations are leaving an enormous predictive gap on the table, and they broadly know it. But knowing it doesn't fix the operational reality that human judgment systematically degrades at volume, a problem widely documented in research on noise in decision-making. Unstated role expectations mean two hiring managers evaluating the same candidate reach opposite conclusions, a pattern frequently observed in research on intuition versus structured decision-making in hiring. Without repeatable measurement systems underneath the process, skills-first hiring has no reliable mechanism to run on, which is why industrial-organizational psychology standards emphasize validated selection procedures.

This is the part that most skills-first conversations skip: removing a degree requirement is a policy change, not a systems change. When large firms dropped degree filters from postings, hires without degrees increased by just 3.5 percentage points because the recruiters, the ATS logic, the interview rubrics, and the incentive structures all stayed the same. The box changed. The behaviour didn't.

Intent is not the problem. Infrastructure is.

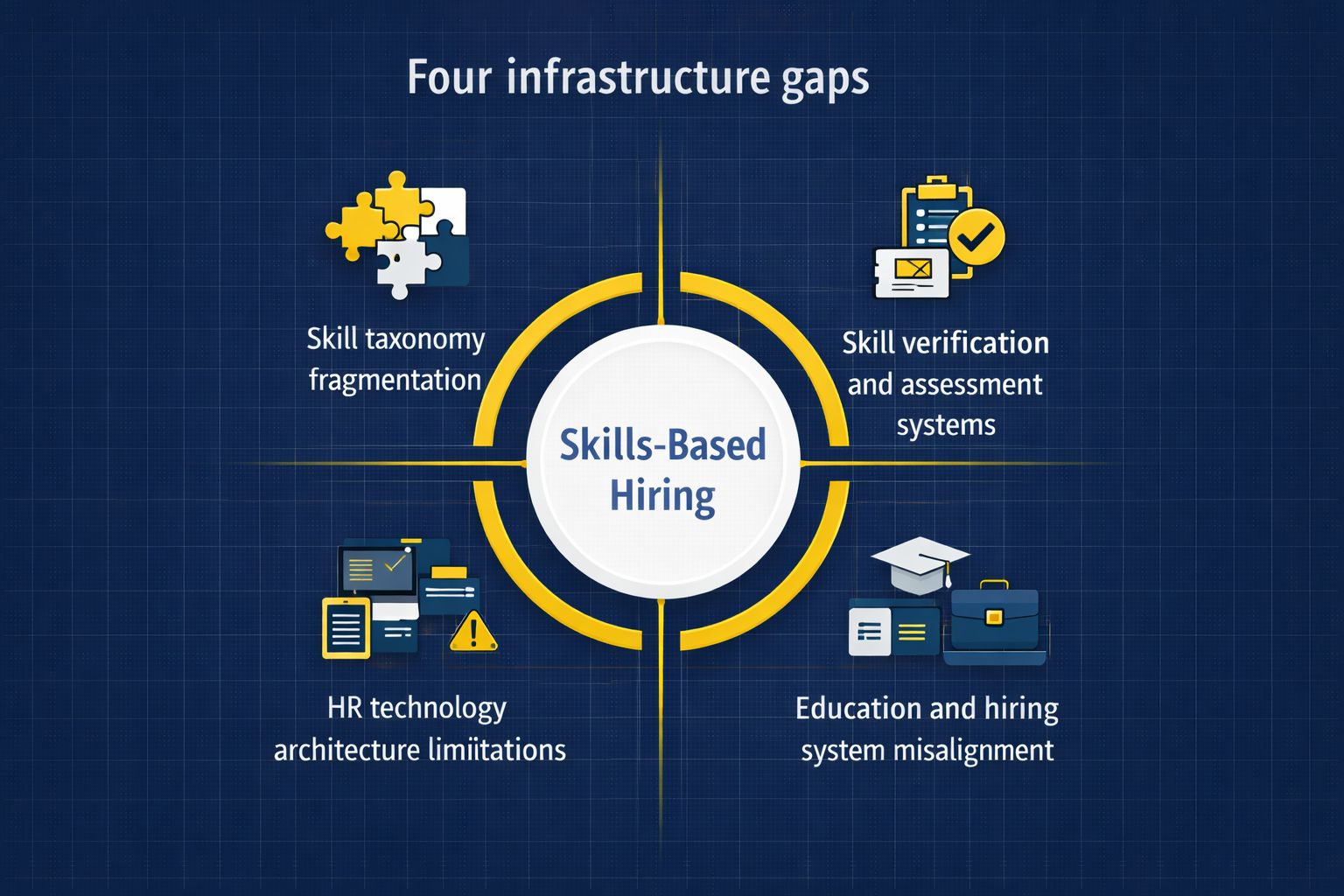

And the infrastructure has four specific failure points, each of which compounds the others.

Gap #1: There Is No Shared Language for Skills

There's a foundational problem at the heart of skills-based hiring that most organisations don't find until something breaks. Recruiters write job titles. Hiring managers describe behaviours. Learning platforms publish course outcomes. Labour statisticians publish occupational codes. Each of these is a reasonable attempt to describe human capability, and none of them maps cleanly onto the others.

The fragmentation runs deeper than it appears. LinkedIn's Skills Graph tracks tens of thousands of skill labels and the relationships between them, making it one of the most sophisticated real-time taxonomies in existence. It is also entirely proprietary. Government-backed frameworks like O*NET in the United States and ESCO across the European Union offer structured, publicly available vocabularies, but they update slowly and were never designed for the pace of modern hiring. National systems like India's NSDC add yet another layer with their own qualification packs and occupational standards. Each framework is useful within its own context. The problem is that none of them talks to the others.

In practice, this means that a candidate whose skills are tagged one way on a learning platform may never surface in a search that uses different labels for the same capability. It means that "advanced" on one system is "working proficiency" on another, making any comparison across platforms essentially arbitrary. It means that a verified credential earned through one provider cannot be reliably read by an employer's ATS because the underlying skill identifiers don't match. Skills data, in other words, is abundant but not portable, and portability is precisely what hiring at scale requires.

The organisations making progress on this are treating skills as a data architecture problem, not an HR problem. They are building one canonical internal taxonomy, mapping external vocabularies into it, and demanding that vendors support structured skill identifiers and exportable credential metadata rather than accepting free-text tags as a substitute. It is unglamorous infrastructure work, and it is exactly the kind of work that makes everything else possible.
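To make the idea of a canonical taxonomy concrete, here is a minimal sketch of mapping external labels into one internal vocabulary. Everything in it is hypothetical: the skill IDs, the source names, and the crosswalk entries are illustrative placeholders, not values from any real framework.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CanonicalSkill:
    skill_id: str  # internal identifier owned by the organisation (hypothetical)
    label: str     # preferred human-readable name


# Hypothetical canonical taxonomy.
CANONICAL = {
    "SK-0001": CanonicalSkill("SK-0001", "Data analysis"),
    "SK-0002": CanonicalSkill("SK-0002", "SQL"),
}

# Hypothetical crosswalk: (source vocabulary, external label) -> canonical ID.
CROSSWALK = {
    ("learning_platform", "data analytics"): "SK-0001",
    ("job_board", "analysing data"): "SK-0001",
    ("learning_platform", "structured query language"): "SK-0002",
    ("job_board", "sql"): "SK-0002",
}


def to_canonical(source: str, label: str) -> Optional[CanonicalSkill]:
    """Resolve an external label to a canonical skill, or None if unmapped."""
    skill_id = CROSSWALK.get((source, label.strip().lower()))
    return CANONICAL.get(skill_id) if skill_id else None
```

Returning `None` for unmapped labels, rather than guessing, keeps the gaps in the crosswalk visible so someone can decide how each new label should be mapped.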

Fixing the language problem doesn't solve skills-based hiring. But it converts an impossible matching problem into an engineering problem you can actually solve. And without solving it, the next gap has nowhere solid to build on.

Gap #2: Skills Are Hard to Verify and Easy to Fake

If skills are the language, assessments are the evidence. But the evidence collection system was never engineered as shared infrastructure. Tests, simulations, work samples, and proctored evaluations exist in abundance across organisations. What almost never exists is a way to make those results travel, accumulate, or mean the same thing from one team to the next.

The problem starts with how assessments are built. Most teams construct bespoke tests for their own hiring rounds, which means a candidate who demonstrates genuine capability in one process is essentially invisible to the next. There is no shared mapping between skills and assessments, no common rubric, no portable output. The proof evaporates inside a single hiring loop and has to be recreated from scratch every time.

It gets worse at volume. When hiring accelerates, assessments are the first thing that gets cut. They take time to administer, require infrastructure to run fairly, and demand trained evaluators to interpret. Under pressure, organisations revert to the signals that are fast and cheap: degree, employer brand, job title. The assessment pipeline, never robust to begin with, simply cannot keep pace with demand. And when candidates figure out that tests reward pattern recognition over genuine capability, they optimise accordingly, which erodes whatever signal the assessments were generating in the first place.

The stakes here are not abstract. Gartner projects that by 2028, one in four candidate profiles will be fraudulent, as AI tools make fabrication easier and harder to detect. The science of what works is not the problem. Most organisations simply cannot consistently deliver, verify, and defend assessments at scale, which is precisely why credentials remain the safer operational bet.

The organisations closing this gap are approaching it as a data problem rather than a testing problem. The first move is internal standardisation: building a single map between skills and the assessments used to evaluate them, so that every hiring team draws from the same evidence library rather than designing from scratch. The second is modularisation: breaking assessments into reusable units (a work sample paired with a scoring rubric and a proctoring specification) that can be deployed across roles and teams without rebuilding. The third is adopting verifiable credentialing standards, so that assessment outcomes travel with the candidate as cryptographically signed records that can be checked rather than taken on trust, instead of sitting as informal notes in a hiring manager's inbox. None of this is technically complex. All of it requires deliberate investment that most organisations have not yet made.

Until assessments stop being one-off artifacts and start acting like reusable data, skills-first hiring will consistently outgrow its ability to prove its own claims. When those three moves work together, assessment effort stops evaporating after each hiring round and starts compounding into an organisational asset. Which brings us to the system those assessments are supposed to feed into.
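As a rough illustration of an assessment outcome stored as a signed, checkable record, consider the sketch below. The field names are hypothetical, and HMAC with a shared secret is used only as a simple stand-in: real verifiable credentialing standards typically rely on public-key signatures, so that a verifier never needs the issuer's secret.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class EvidenceRecord:
    """One reusable evidence unit outcome (all field names are hypothetical)."""
    candidate_id: str
    skill_id: str
    work_sample_id: str
    rubric_id: str
    proctoring_spec_id: str
    score: float


def canonical_bytes(record: EvidenceRecord) -> bytes:
    # Sorted keys and fixed separators give a stable byte form to sign.
    payload = json.dumps(asdict(record), sort_keys=True, separators=(",", ":"))
    return payload.encode("utf-8")


def sign(record: EvidenceRecord, key: bytes) -> str:
    """Return a hex HMAC-SHA256 signature over the record."""
    return hmac.new(key, canonical_bytes(record), hashlib.sha256).hexdigest()


def verify(record: EvidenceRecord, signature: str, key: bytes) -> bool:
    """Check the signature in constant time; False if the record was altered."""
    return hmac.compare_digest(sign(record, key), signature)
```

If any field changes after signing, for example a score edited by hand, `verify` returns `False`, which is the property that lets evidence be checked rather than trusted.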

Gap #3: HR Tech Was Never Designed for Skills-First Hiring

The technology running most hiring processes was built for a different era. Applicant tracking systems, HRIS platforms, and legacy talent tools were architected around a simple model: people are described by degrees, job titles, and years of experience, and roles are filled by matching those fields. That model made sense when credentials were the primary signal. It is now the primary obstacle.

The rigidity runs deeper than most leaders realise until they try to change it. Legacy systems don't store structured skill identifiers or proficiency levels because those concepts weren't in scope when the platforms were designed. Screening still runs on keyword matching and boolean filters, which means a candidate whose skills are described in slightly different language than the job posting gets eliminated before a human ever sees them. The U.S. Chamber of Commerce has documented this directly: applicant tracking systems routinely filter out qualified candidates from non-traditional backgrounds, not because they lack capability, but because their profiles don't match an increasingly inflated checklist of exact credential requirements.
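The difference between keyword screening and matching on structured skill identifiers can be shown in a few lines. This is a simplified sketch: the skill IDs are hypothetical, and it assumes some upstream step has already tagged the candidate's profile with those IDs.

```python
def keyword_screen(required_terms: list[str], profile_text: str) -> bool:
    """Legacy-style filter: every required term must appear verbatim."""
    text = profile_text.lower()
    return all(term.lower() in text for term in required_terms)


def skill_id_screen(required_ids: set[str], candidate_ids: set[str]) -> bool:
    """Structured filter: every required skill ID must be present."""
    return required_ids <= candidate_ids


# The same candidate, described in different words than the posting.
profile = "Wrote database queries and analysed sales data for forecasting."
candidate_skill_ids = {"SK-0001", "SK-0002"}  # hypothetical upstream tagging

rejected_by_keywords = not keyword_screen(["SQL", "data analysis"], profile)
accepted_by_ids = skill_id_screen({"SK-0001", "SK-0002"}, candidate_skill_ids)
```

Here the keyword filter rejects the candidate because neither phrase appears verbatim, while the identifier match accepts them. The quality of that second result depends entirely on how reliably profiles are tagged in the first place.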

The interoperability problem compounds this. Most HR stacks are a patchwork of vendors, custom integrations, and manual data exports. Assessment outputs don't flow into candidate profiles as verifiable, structured data. So even when an organisation runs good assessments and generates meaningful evidence about a candidate's capabilities, that evidence rarely survives contact with the broader hiring system. The next decision gets made on credentials anyway.

Some vendors are catching up. Workday and Greenhouse have both added skills features in recent iterations, though many organisations still misunderstand what AI hiring systems actually solve. But a feature is not an architecture. Adding a skills tag to a candidate record doesn't change the underlying data model, doesn't make assessment outputs portable, and doesn't connect hiring to development systems in any meaningful way.

The organisations making real headway are treating this as a data contract problem: requiring vendors to support structured skill identifiers as a condition of procurement, adding a normalisation layer so taxonomy and assessment data speak a common language across hiring and analytics, and insisting that assessment results be treated as first-class data objects with provenance, not files in a folder nobody can query.
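A normalisation layer of this kind might look something like the following sketch, which maps vendor-specific proficiency labels onto one internal scale while keeping provenance. The vendor names, the labels, and the 1 to 4 scale are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Hypothetical vendor scales mapped onto one internal 1-4 proficiency scale.
PROFICIENCY_MAP = {
    "vendor_a": {"beginner": 1, "intermediate": 2, "advanced": 3, "expert": 4},
    "vendor_b": {"awareness": 1, "working proficiency": 3, "mastery": 4},
}


@dataclass(frozen=True)
class SkillObject:
    candidate_id: str
    skill_id: str
    level: int         # internal scale, comparable across vendors
    source: str        # provenance: which system produced the result
    source_level: str  # provenance: original label, kept for audit


def normalise(candidate_id: str, skill_id: str,
              source: str, source_level: str) -> SkillObject:
    """Convert a vendor result into a structured, queryable skill object."""
    try:
        level = PROFICIENCY_MAP[source][source_level.strip().lower()]
    except KeyError:
        # Fail loudly instead of guessing at an unmapped vendor or label.
        raise ValueError(
            f"No mapping for source {source!r}, level {source_level!r}"
        ) from None
    return SkillObject(candidate_id, skill_id, level, source, source_level)
```

Keeping the original label alongside the normalised level means an auditor can always trace a stored score back to what the vendor actually reported.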

The technology to do this exists. What's missing is the organisational will to demand it. But even when that will exists, there's still the question of where the skills evidence comes from in the first place.

Gap #4: Education and Hiring Systems Are Poorly Coupled

The talent pipeline has a structural crack that most organisations treat as someone else's problem. Schools, bootcamps, and training providers optimise for credentials and completion metrics. Employers optimise for speed and risk reduction. The two systems have never been designed to talk to each other, and the gap between them shows up directly in hiring quality.

The numbers are stark. India's Economic Survey found that only 8.25% of graduates work in roles that match their qualifications. Not mismatched slightly, but structurally misaligned. The India Skills Report puts overall graduate employability at around 42%, meaning more than half of degree-holders entering the workforce lack the measurable capabilities employers actually need. These aren't uniquely Indian problems. They are an extreme illustration of a universal pattern: an entire education system optimises for credential production while the labour market shifts toward capability demonstration, a dynamic repeatedly highlighted in future-of-jobs labour market research.

The underlying cause is a feedback loop that doesn't exist. Employers almost never share performance data with training providers in a form that allows curricula to be meaningfully revised. Without a continuous signal from the workplace, course designers default to breadth and certification coverage rather than the specific, demonstrable behaviours that predict early job performance. By the time a curriculum catches up to what the industry needs, the role definitions have moved on again. The result is that credentials keep accumulating while the capability gap quietly widens.

There is also a cadence problem that rarely gets named directly. Education is cohort-based and episodic. Enterprises need a continuous, scalable supply of job-ready people, something widely discussed in research on the future of work and workforce transformation. Those two rhythms are fundamentally mismatched, and no amount of curriculum reform fixes that without changing the relationship between employers and training providers from transactional to operational.

Policymakers are responding. The World Economic Forum has been actively pushing global skills-alignment frameworks for labour markets. National systems are being revised. But policy momentum moves at a different speed than a hiring pipeline that needs to perform next quarter. What actually closes the gap is treating education partnerships the way you treat any other operational dependency: with defined outputs, measurable conversion metrics, and shared performance data that lets both sides improve. Leaders who do this stop funding courses and start buying outcomes. That shift in framing changes everything about how the upstream pipeline behaves.

Taken together, these four gaps form a compounding system. A broken taxonomy makes assessments inconsistent. Inconsistent assessments can't integrate meaningfully into HR tech. HR tech that doesn't speak skills can't signal back to education what it actually needs. And so the credential remains the only thing that moves smoothly through the entire chain. Which raises an honest question: given all of that, why would anyone expect credentials to stop winning?

Why Credentials Still Win (and Probably Will, for a While)

It's worth being honest about something this conversation often avoids: credentials aren't just inertia. They are doing real work, and until that work gets done by something better, they will keep winning regardless of what the job posting says.

Consider what a degree or a recognisable employer brand actually provides in a hiring context. It is fast, collapsing a complex signal into a single legible token under time pressure. It is defensible, giving boards, legal teams, and auditors a clean, documentable criterion that survives a post-hire review. And it is portable, recognised across organisations, geographies, and industries without requiring any shared infrastructure to interpret. These are not trivial functions. They are precisely the functions that skills evidence, in its current state, cannot reliably replicate.

The incentive problem runs deeper still. Compensation structures, promotion ladders, and hiring panel composition were all designed around credentials. HR metrics still reward pedigree. Changing hiring behaviour without changing those underlying incentives is like adjusting the output without touching the inputs. The system simply reproduces itself.

There is also a network effect at work that tends to get underestimated. Universities, large technology employers, and professional bodies function as brand proxies for quality, and those proxies persist because hiring managers and referral networks keep reinforcing them. Every senior hire made through a prestigious credential strengthens the signal for the next one. The cycle is self-perpetuating precisely because it keeps working well enough.

None of this is an argument for preserving the status quo. It's a practical observation about sequencing. Credentials will remain the default until the alternatives can match them on speed, defensibility, and portability, and that requires infrastructure investment, not just policy change.

For leaders who want to start shifting the balance, three moves matter most. Map credentials to the skills they're being used as proxies for, so you can identify exactly where verified work samples or validated assessments could substitute without increasing organisational risk. Pilot replacement lanes on a small number of critical roles, running validated assessments in place of credential filters and tracking outcomes tightly enough to build an internal evidence base. And change the incentive structure by tying hiring and promotion metrics to downstream performance data rather than credential counts, because until the reward system shifts, individual behaviour won't.

The goal isn't to abolish credentials. It's to replace the specific functions they serve with systems that are faster, fairer, and more predictive, and to do it in ways the board can actually defend. Some organisations are already doing exactly that.

What Real Progress Actually Looks Like

The infrastructure gaps described in this piece are real, but they are engineering problems, not unsolvable ones. Organisations that treat skills-first hiring as a systems build rather than a policy shift are already seeing results that compound in ways credential-based hiring never could.

The foundation is a canonical skills graph that the organisation owns and controls, with external vocabularies mapped into it. LinkedIn's Skills Graph, O*NET, ESCO, and platform-specific tags are all useful inputs, but none of them should be the operating standard. When an organisation builds a single queryable dataset of skill labels, proficiency levels, and role relationships, fragmented matching problems become solvable engineering problems.

On top of that, assessments need to be modularised into reusable evidence units: a work sample paired with a rubric and a proctoring specification that any hiring team can deploy without rebuilding from scratch. When outputs are standardised and stored as verifiable data, they accumulate value across hiring rounds rather than evaporating after each one. A normalisation layer in the HR stack then connects these pieces, so that assessment outputs and learning records flow into candidate profiles as structured skill objects rather than PDFs nobody can query.

The organisations that have built even partial versions of this have seen results stack up in ways that are hard to attribute to anything else. Unilever replaced resume screening with validated assessments and saw time-to-hire drop from four months to four weeks while workforce diversity climbed 16%. ADT Security reduced 90-day attrition by 10% while tripling recruiter efficiency. Fractal Analytics reported hiring effectiveness three times higher and time-to-hire down 60%. These outcomes are what happens when assessment effort compounds into a system rather than disappearing into isolated hiring loops.

The last piece is governance. Skills infrastructure is a board-level investment, not an HR initiative. The metrics that matter are share of hires made via validated signals, early retention of non-credential hires, and ROI on assessment and training investment over time. Without visibility at that level, the initiative stays a pilot indefinitely.
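The three governance metrics named above are straightforward to compute once hires are recorded as structured data. The sketch below is illustrative only: the record fields, the 90-day retention window, and the simple ROI formula are assumptions, not a prescribed reporting standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Hire:
    via_validated_signal: bool  # hired on assessment evidence (hypothetical flag)
    has_degree: bool
    retained_90_days: bool      # assumed definition of "early retention"


def share_via_validated_signals(hires: list[Hire]) -> Optional[float]:
    """Fraction of all hires made via validated signals."""
    if not hires:
        return None
    return sum(h.via_validated_signal for h in hires) / len(hires)


def early_retention_non_credential(hires: list[Hire]) -> Optional[float]:
    """Fraction of hires without a degree who were retained past 90 days."""
    cohort = [h for h in hires if not h.has_degree]
    if not cohort:
        return None
    return sum(h.retained_90_days for h in cohort) / len(cohort)


def roi(benefit: float, cost: float) -> float:
    """Simple return on investment: net benefit divided by cost."""
    return (benefit - cost) / cost
```

Both rate functions return `None` for an empty cohort rather than zero, so a dashboard does not confuse "no data yet" with "nobody retained".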

When skills are named consistently, proven reliably, and carried portably, hiring stops being a series of isolated bets and becomes a measurable forecasting system. Credentials will not vanish overnight, but their functions can be replaced by better infrastructure. That is when skills-first hiring stops being a theory and starts becoming a predictable practice.

Conclusion: The Real Barrier Is Structural

Skills-based hiring hasn't stalled because leaders don't understand its value. It has stalled because most organisations are trying to run it on infrastructure designed for credentials, intuition, and speed rather than evidence, prediction, and scale. Degrees, brand names, and job titles continue to dominate hiring, not because they are accurate, but because they are fast, defensible, and interoperable inside today's systems. Until skills can move just as smoothly through hiring workflows, they will remain a secondary signal, no matter how loudly organisations claim otherwise.

Better hiring outcomes don't come from better intentions or isolated tools. They come from systems that make good decisions repeatable. When skills are named consistently, demonstrated through validated assessments, and integrated into everyday hiring workflows (the foundation of what we describe as a data-driven hiring decision system), hiring shifts from narrative judgment to probabilistic forecasting. That shift compounds financially through faster time-to-productivity, lower early attrition, and teams that actually scale.

This is why the skills transition is ultimately a leadership and infrastructure challenge, not an HR one. It demands ownership at the CEO and board level because it cuts across risk, compliance, workforce planning, and long-term competitiveness. Companies that invest now in skills infrastructure won't just hire differently. They will build talent advantages that are genuinely difficult to replicate. Those that wait will keep optimising broken proxies and paying for it quietly in churn, mis-hires, and lost growth.

The question is no longer whether skills-based hiring works. The evidence settled that years ago. The real question is whether your organisation is willing to build the systems that make it possible, before the talent market forces the issue for you.

The infrastructure gaps described in this piece are solvable, but they require the right systems underneath them. Iqigai is built precisely for this: replacing credential-era hiring infrastructure with validated signals, modular assessments, and decision frameworks that make skills-first hiring reliable at scale. If your organisation is ready to move from policy to practice, start here.